The Lawsuits Are Writing Themselves: How AI Is Flooding Federal Courts

There are moments in legal history when the system evolves through doctrine, and others when it is dragged forward by technology (usually unwillingly). A recent study of pro se filings in federal court has found that generative AI has not politely asked for admission to the federal courts; it has simply started filing cases.

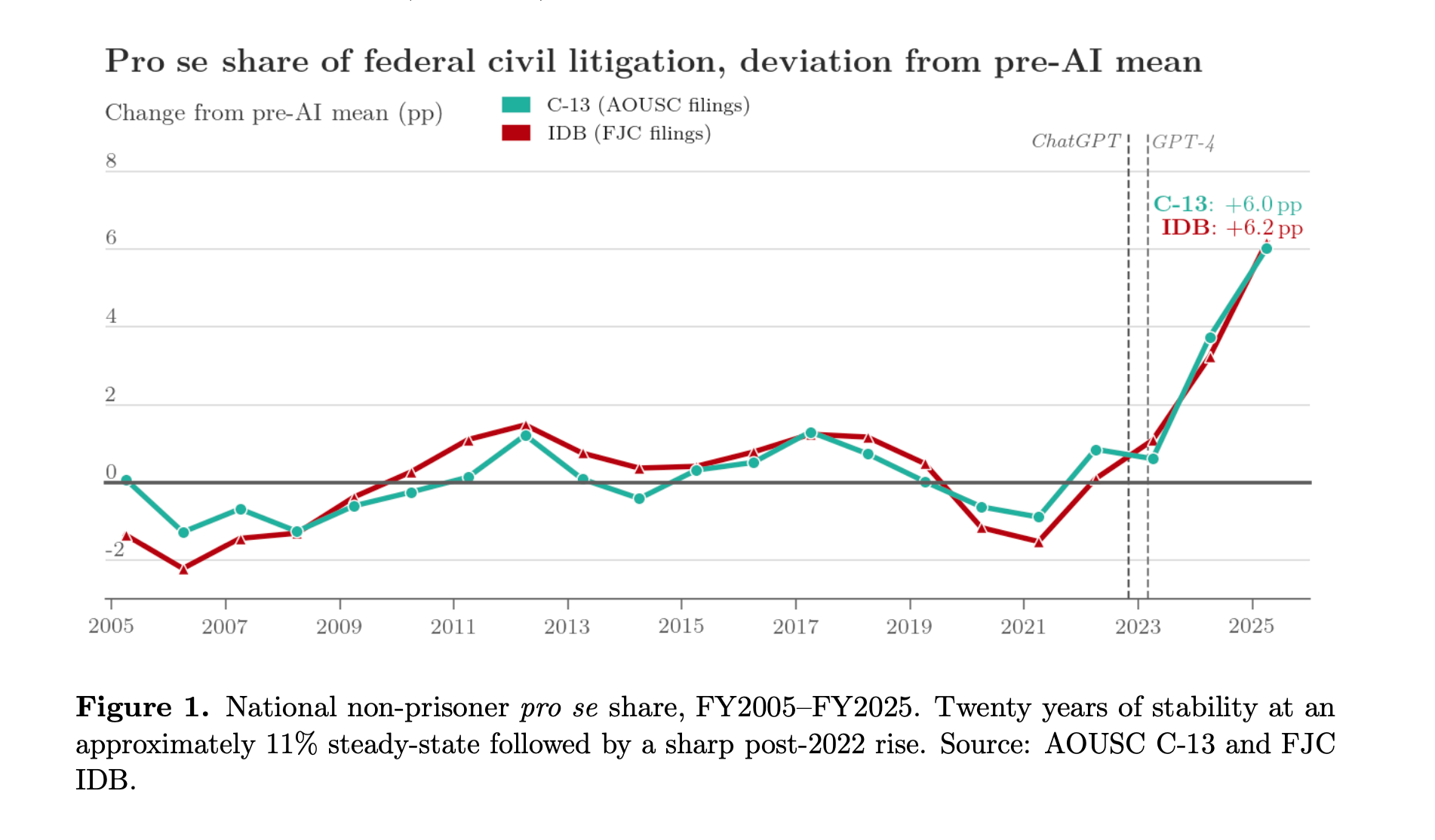

The core finding is stark: after two decades of remarkable stability, pro se litigation in federal civil courts has surged. For roughly twenty years, self-represented litigants accounted for about 11% of filings—a number so consistent it bordered on comforting. Then, beginning in 2023, the line broke. By FY2025, pro se filings reached 16.8%, a shift without precedent in modern federal court data.

This is not a substitution story where litigants are abandoning lawyers en masse. Represented filings remain flat. Instead, this is an expansion story: new entrants are now walking into federal court with pleadings drafted, or at least assisted, by AI. One suspects many of them would not have filed at all five years ago.

And, importantly, they are not filing randomly.

The increase is highly concentrated in what might politely be called “template-friendly” cases: civil rights, consumer credit, foreclosure. In other words, cases where the hardest part is assembling a coherent narrative that satisfies pleading standards. AI is quite good at that. It remains notably absent from more complex, expertise-heavy litigation like patent or securities law.

One might be tempted to frame this as a modest access-to-justice success story. That temptation should be resisted. Defendants don’t choose litigation; plaintiffs do. The rise in plaintiff-side pro se filings suggests AI is lowering the barrier to initiating legal action, not merely helping people cope with litigation they did not choose.

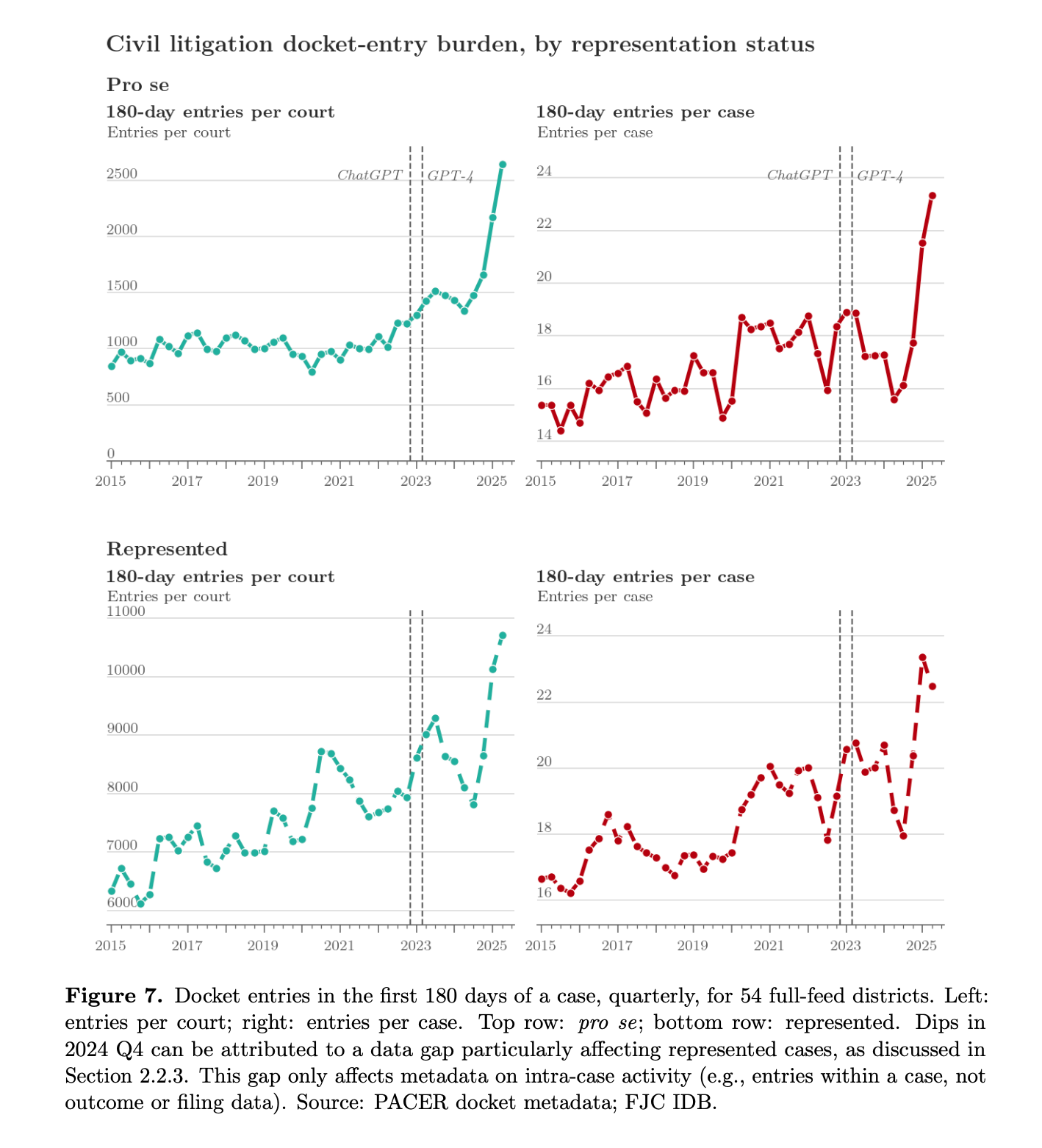

The reason: while case outcomes and durations remain largely unchanged (courts are not resolving cases faster or slower), the activity within cases has exploded. Docket entries in the first 180 days of a case have increased dramatically, with pro se-generated entries per court up as much as 158% relative to pre-AI baselines.

Translated into plain English: the system is not collapsing, but it is getting noisier. Much noisier.

Each docket entry is not an abstraction; it is a demand on judicial attention. Motions, responses, and orders must all be processed and resolved by a human judge who, inconveniently, cannot be scaled like cloud compute. The paper’s most unsettling implication is not that AI is flooding courts with frivolous cases. It’s that AI is enabling litigants to sustain engagement with the system: filing more, responding more, persisting longer.

In effect, AI has not made litigation easier to win. It has made it easier to do.

The authors reinforce this with direct evidence: AI-generated text is now visibly embedded in filings. In a sampled dataset of complaints, AI detection rates rise from effectively zero pre-2023 to 18% by early 2026. That is not a rounding error. That is nearly one in five complaints containing machine-generated language.

And that figure is likely conservative.

The geographic spread of this phenomenon further undermines any comforting narrative that this is a localized anomaly. The increase appears in nearly every state, suggesting a broad technological shock rather than policy drift or institutional variation.

The legal system, in other words, is experiencing something rare: a demand shock without a corresponding supply response. Judges cannot be hired quickly. They cannot (yet) use AI to meaningfully increase throughput. And they cannot decline cases.

That leaves us with a familiar legal problem in an unfamiliar form: a system built for scarcity is now confronting abundance.

Two possible futures emerge.

The first is an arms race. As pro se litigants file more, represented parties respond in kind, often using the same tools. The result is escalating procedural activity without corresponding improvements in resolution. Litigation becomes denser, not better.

The second is institutional strain, particularly for repeat defendants like government agencies. When one side’s marginal cost of filing drops to near zero, asymmetry emerges. The government cannot “scale up” its responses in the same way an AI-assisted litigant can generate filings.

Neither scenario is especially reassuring.

The paper offers no policy prescriptions, but the subtext is clear: something has to give. Courts must adopt technological augmentation, triage cases more aggressively, or accept growing congestion as the new normal.

In short: the machines have learned to draft. They have not yet learned to litigate. But they have made it much easier for everyone else to try.